This guide demonstrates how to configure comprehensive monitoring for Elasticsearch clusters in KubeBlocks using the Prometheus Operator, a PodMonitor for the Elasticsearch exporter, and Grafana dashboards.

Before proceeding, ensure the following:

kubectl create ns demo

namespace/demo created

Deploy the kube-prometheus-stack using Helm:

helm repo add prometheus-community https://prometheus-community.github.io/helm-charts

helm install prometheus prometheus-community/kube-prometheus-stack \
  -n monitoring \
  --create-namespace

Check all components are running:

kubectl get pods -n monitoring

Example Output:

NAME READY STATUS RESTARTS AGE

alertmanager-prometheus-kube-prometheus-alertmanager-0 2/2 Running 0 114s

prometheus-grafana-75bb7d6986-9zfkx 3/3 Running 0 2m

prometheus-kube-prometheus-operator-7986c9475-wkvlk 1/1 Running 0 2m

prometheus-kube-state-metrics-645c667b6-2s4qx 1/1 Running 0 2m

prometheus-prometheus-kube-prometheus-prometheus-0 2/2 Running 0 114s

prometheus-prometheus-node-exporter-47kf6 1/1 Running 0 2m1s

prometheus-prometheus-node-exporter-6ntsl 1/1 Running 0 2m1s

prometheus-prometheus-node-exporter-gvtxs 1/1 Running 0 2m1s

prometheus-prometheus-node-exporter-jmxg8 1/1 Running 0 2m1s
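Scanning the pod listing by eye becomes error-prone as the number of pods grows. The following is a minimal sketch of a filter for that listing; `not_running` is a hypothetical helper name, not part of kubectl or KubeBlocks, and it assumes the default column layout of `kubectl get pods` (STATUS in the third column).

```shell
# Hypothetical helper: reads `kubectl get pods` table output on stdin,
# prints the name of every pod whose STATUS column is not "Running",
# and returns a non-zero exit code if at least one such pod was found.
not_running() {
  awk 'NR > 1 && $3 != "Running" { print $1; bad = 1 } END { exit bad + 0 }'
}
```

A possible invocation is `kubectl get pods -n monitoring | not_running`. Note that this sketch also flags pods in the `Completed` state, which may be acceptable for one-off jobs.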

KubeBlocks uses a declarative approach for managing Elasticsearch clusters. Below is an example configuration that deploys an Elasticsearch cluster with separate replicas for the data and master roles.

Apply the following YAML configuration to deploy the cluster:

apiVersion: apps.kubeblocks.io/v1
kind: Cluster
metadata:
  name: es-multinode
  namespace: demo
spec:
  terminationPolicy: Delete
  componentSpecs:
    - name: data
      componentDef: elasticsearch-data-8
      serviceVersion: 8.8.2
      configs:
        - name: es-cm
          variables:
            version: 8.8.2
      replicas: 3
      resources:
        limits:
          cpu: "1"
          memory: "2Gi"
        requests:
          cpu: "1"
          memory: "2Gi"
      volumeClaimTemplates:
        - name: data
          spec:
            accessModes:
              - ReadWriteOnce
            resources:
              requests:
                storage: 20Gi
    - name: master
      componentDef: elasticsearch-master-8
      serviceVersion: 8.8.2
      configs:
        - name: es-cm
          variables:
            version: 8.8.2
      replicas: 3
      resources:
        limits:
          cpu: "1"
          memory: "2Gi"
        requests:
          cpu: "1"
          memory: "2Gi"
      volumeClaimTemplates:
        - name: data
          spec:
            accessModes:
              - ReadWriteOnce
            resources:
              requests:
                storage: 20Gi

Monitor the cluster status until it transitions to the Running state:

kubectl get cluster es-multinode -n demo -w

Example Output:

NAME CLUSTER-DEFINITION TERMINATION-POLICY STATUS AGE

es-multinode Delete Creating 10s

es-multinode Delete Updating 41s

es-multinode Delete Running 42s

Check the pod status and roles:

kubectl get pods -l app.kubernetes.io/instance=es-multinode -n demo

Example Output:

NAME READY STATUS RESTARTS AGE

es-multinode-data-0 4/4 Running 0 6m21s

es-multinode-data-1 4/4 Running 0 6m21s

es-multinode-data-2 4/4 Running 0 6m21s

es-multinode-master-0 4/4 Running 0 6m21s

es-multinode-master-1 4/4 Running 0 6m21s

es-multinode-master-2 4/4 Running 0 6m21s

Once the cluster status becomes Running, your Elasticsearch cluster is ready for use.

If you are creating the cluster for the very first time, it may take some time to pull images before running.

Confirm that the exporter in each pod serves metrics on port 9114:

kubectl -n demo exec -it pods/es-multinode-data-0 -- \
  curl -s http://127.0.0.1:9114/metrics | head -n 50

kubectl -n demo exec -it pods/es-multinode-master-0 -- \
  curl -s http://127.0.0.1:9114/metrics | head -n 50
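The first 50 lines of exporter output may not include the metric you care about. As a rough sketch, a small filter can pull the value of a single metric out of the Prometheus text format instead; `metric_value` is a hypothetical helper name, and the sketch assumes samples carry no trailing timestamp, so the value is the last field on the line.

```shell
# Hypothetical helper: reads Prometheus text-format metrics on stdin and
# prints the value of every sample whose metric name equals $1.
# Comment lines (# HELP / # TYPE) are skipped; labels are ignored.
metric_value() {
  awk -v name="$1" '
    $0 !~ /^#/ {
      n = $1
      sub(/[{].*/, "", n)        # strip the label set, if any
      if (n == name) print $NF   # assumes no trailing timestamp
    }'
}
```

For example, the output of `kubectl -n demo exec pods/es-multinode-data-0 -- curl -s http://127.0.0.1:9114/metrics` could be piped into `metric_value elasticsearch_clusterinfo_up`.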

Create a PodMonitor so that Prometheus scrapes the exporter endpoint:

apiVersion: monitoring.coreos.com/v1
kind: PodMonitor
metadata:
  name: es-multinode-pod-monitor
  namespace: demo
  labels: # match labels in `prometheus.spec.podMonitorSelector`
    release: prometheus
spec:
  jobLabel: app.kubernetes.io/managed-by
  podMetricsEndpoints:
    - path: /metrics
      port: metrics
      scheme: http
  namespaceSelector:
    matchNames:
      - demo
  selector:
    matchLabels:
      app.kubernetes.io/instance: es-multinode

PodMonitor Configuration Guide

| Parameter | Required | Description |
|---|---|---|
| port | Yes | Must match the exporter port name ('metrics') |
| namespaceSelector | Yes | Targets the namespace where Elasticsearch runs |
| labels | Yes | Must match Prometheus's podMonitorSelector |
| path | No | Metrics endpoint path (default: /metrics) |
| interval | No | Scraping interval (default: 30s) |
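To show how the parameters in the table fit together, here is a minimal sketch that renders a PodMonitor manifest from a cluster name, a namespace, and an optional scrape interval. `render_podmonitor` is a hypothetical helper, and the `release: prometheus` label assumes the Helm release name used earlier in this guide.

```shell
# Hypothetical helper: prints a PodMonitor manifest to stdout.
# Arguments: cluster name, namespace, scrape interval (defaults to 30s).
render_podmonitor() {
  cluster="$1"
  namespace="$2"
  interval="${3:-30s}"
  cat <<EOF
apiVersion: monitoring.coreos.com/v1
kind: PodMonitor
metadata:
  name: ${cluster}-pod-monitor
  namespace: ${namespace}
  labels:
    release: prometheus   # assumed to match prometheus.spec.podMonitorSelector
spec:
  jobLabel: app.kubernetes.io/managed-by
  podMetricsEndpoints:
    - path: /metrics
      port: metrics
      scheme: http
      interval: ${interval}
  namespaceSelector:
    matchNames:
      - ${namespace}
  selector:
    matchLabels:
      app.kubernetes.io/instance: ${cluster}
EOF
}
```

The rendered manifest could then be applied with, for example, `render_podmonitor es-multinode demo 15s | kubectl apply -f -`.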

Forward and access Prometheus UI:

kubectl port-forward svc/prometheus-kube-prometheus-prometheus -n monitoring 9090:9090

Open your browser and navigate to: http://localhost:9090/targets

Check if there is a scrape job corresponding to the PodMonitor (the job name is 'demo/es-multinode-pod-monitor').

Expected State:

The target's state should be UP, and its labels should include any defined under podTargetLabels (e.g., 'app_kubernetes_io_instance').

Verify metrics are being scraped:

curl -sG "http://localhost:9090/api/v1/query" --data-urlencode 'query=elasticsearch_clusterinfo_up{job="kubeblocks"}' | jq

Example Output:

{

"status": "success",

"data": {

"resultType": "vector",

"result": [

{

"metric": {

"__name__": "elasticsearch_clusterinfo_up",

"container": "exporter",

"endpoint": "metrics",

"instance": "10.244.0.49:9114",

"job": "kubeblocks",

"namespace": "demo",

"pod": "es-multinode-master-2",

"url": "http://localhost:9200"

},

"value": [

1747666760.443,

"1"

]

},

... // more lines omitted

Port-forward and login:

kubectl port-forward svc/prometheus-grafana -n monitoring 3000:80

Open your browser and navigate to http://localhost:3000. Use the default credentials to log in:

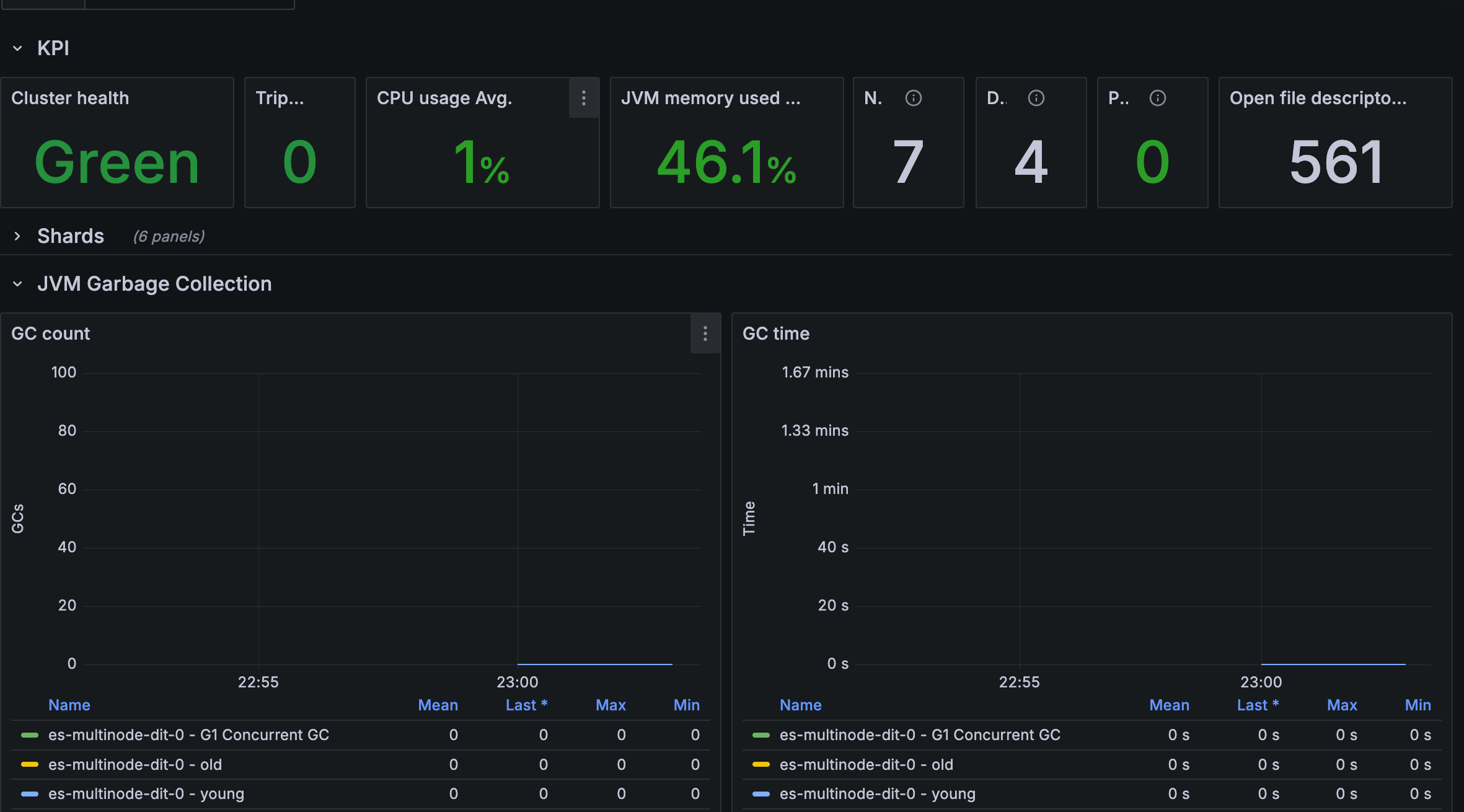

Import the KubeBlocks Elasticsearch dashboard:

Figure 1. Elasticsearch dashboard

To delete all the created resources, run the following commands:

kubectl delete cluster es-multinode -n demo

kubectl delete podmonitor es-multinode-pod-monitor -n demo

kubectl delete ns demo

In this tutorial, we set up observability for an Elasticsearch cluster in KubeBlocks using the Prometheus Operator.

By configuring a PodMonitor, we enabled Prometheus to scrape metrics from the Elasticsearch exporter.

Finally, we visualized these metrics in Grafana. This setup provides valuable insights for monitoring the health and performance of your Elasticsearch databases.